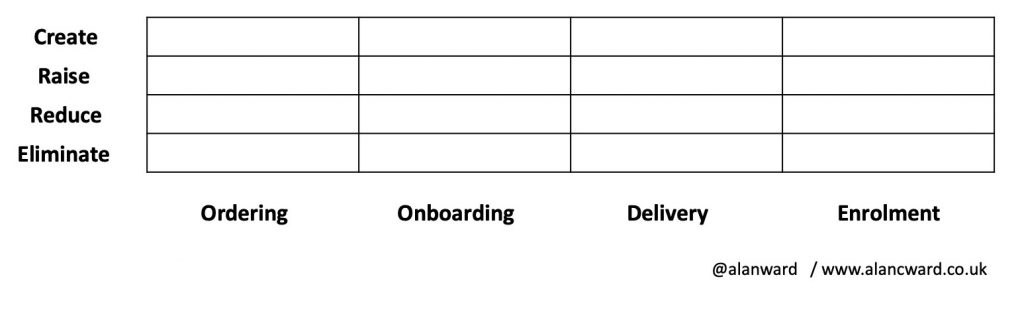

ERRC as an 8 box model

Blue Ocean Strategy includes the concept of an ERRC diagram, which covers four strategic decisions: Eliminate, Reduce, Raise, and Create. It's usually depicted as a linear graph, where the authors of each ERRC diagram decide which features of their product (or indeed which products) should fall into which section of the graph. The…

